The insurance industry has always been built on data-driven decision-making, particularly in underwriting and pricing. But today, the firms that can access and analyse the growing volume of data available at the underwriting stage have a clear competitive advantage.

For decades, pricing models were constrained by computing power, storage costs and the practical limits of spreadsheets. Today, those constraints have largely disappeared.

Insurers now have access to vast quantities of information - from detailed claims histories and catastrophe models to satellite imagery and external data feeds. At the same time, cloud computing has made storage and processing power much less expensive and more scalable.

Pricing is no longer a small-data exercise. It has become a big-data problem - and one that requires tools and processes very different from those the industry has traditionally relied on.

Today’s winners are already adapting to this reality. Others are not. As insurance rates continue to soften, insurers need to act to improve their pricing and underwriting accuracy.

The limits of traditional pricing

Despite the explosion in available data, many insurers still rely on traditional workflows designed for a far simpler analytical environment, when a hard market tolerated less accurate pricing.

Legacy pricing models were built around relatively small datasets and deterministic approaches. They were designed to run in spreadsheets and deliver results quickly enough for underwriters to use in day-to-day decisions.

That has changed as markets have softened. Accurately pricing today’s complex insurance and reinsurance structures requires modelling that both consumes and generates large volumes of data.

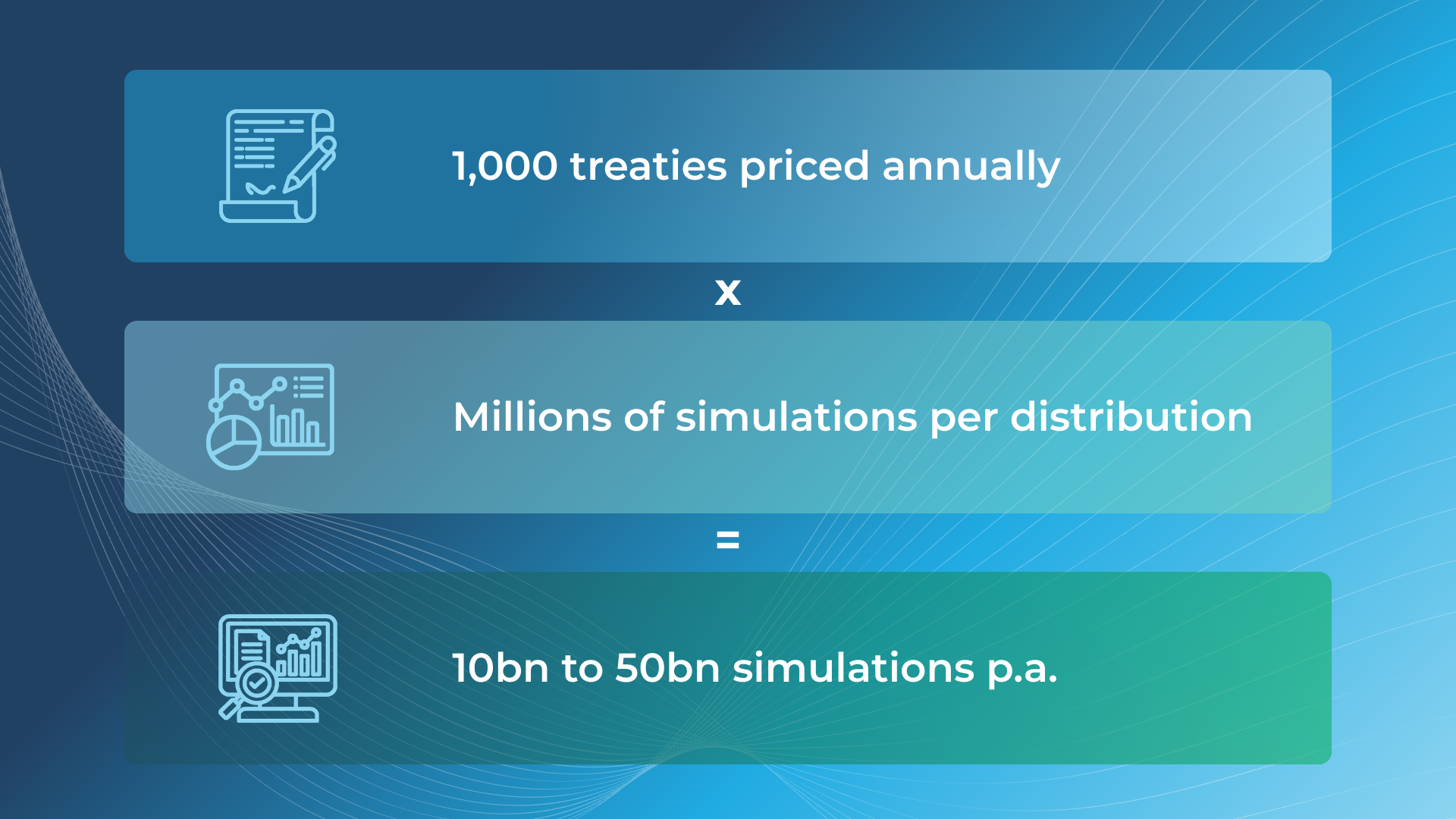

For reinsurers that run simulations for pricing, the data requirement is even larger. The more simulations that are run, the more stable and reliable the results become - particularly when modelling extreme or longer-tail risks.
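A minimal Monte Carlo sketch makes the stability point concrete. It assumes a hypothetical contract whose annual loss is a Poisson number of claims with lognormal claim sizes; the parameters are illustrative, not calibrated. The standard error of the estimated mean shrinks roughly with the square root of the number of simulations, so running 100 times as many simulations tightens the estimate about tenfold.

```python
import math
import random
import statistics


def simulate_annual_loss(rng: random.Random, freq: float = 2.0,
                         mu: float = 13.0, sigma: float = 1.0) -> float:
    """One simulated year: Poisson claim count, lognormal claim sizes.

    All parameters are illustrative assumptions, not calibrated values.
    """
    # The random module has no Poisson sampler, so count exponential
    # inter-arrival times that fall inside one year.
    count, t = 0, rng.expovariate(freq)
    while t < 1.0:
        count += 1
        t += rng.expovariate(freq)
    return sum(rng.lognormvariate(mu, sigma) for _ in range(count))


def estimate(n_sims: int, seed: int = 42) -> tuple[float, float]:
    """Return the Monte Carlo mean annual loss and its standard error."""
    rng = random.Random(seed)
    losses = [simulate_annual_loss(rng) for _ in range(n_sims)]
    mean = statistics.fmean(losses)
    std_err = statistics.stdev(losses) / math.sqrt(n_sims)
    return mean, std_err


for n in (1_000, 10_000, 100_000):
    mean, se = estimate(n)
    print(f"{n:>7} sims: mean = {mean:,.0f}, std error = {se:,.0f}")
```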

Consider excess-of-loss reinsurance. Properly modelling reinstatements, catastrophe events and extreme loss scenarios requires millions of simulations for a single contract.

Scale that across an organisation pricing hundreds or thousands of treaties each year and the computational demands grow rapidly: billions of simulations are required annually, generating vast amounts of new data.

Spreadsheets were never designed for that scale of analysis.
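The reinstatement mechanics behind these simulation counts can be sketched in a few lines. The example assumes a hypothetical per-event layer of 10m xs 10m and a made-up event model (Poisson frequency, Pareto severity), and it ignores reinstatement premiums, which a real pricing model would also simulate. Each reinstatement restores one full limit of cover, so the annual payout is capped at the limit times one plus the number of reinstatements.

```python
import random
import statistics


def poisson(rng: random.Random, lam: float) -> int:
    """Poisson draw by counting exponential inter-arrival times in one year."""
    count, t = 0, rng.expovariate(lam)
    while t < 1.0:
        count += 1
        t += rng.expovariate(lam)
    return count


def layer_recovery(event_loss: float, retention: float, limit: float) -> float:
    """Part of one event loss that falls in the layer `limit xs retention`."""
    return min(max(event_loss - retention, 0.0), limit)


def annual_recovery(event_losses, retention, limit, reinstatements):
    """Reinsurer's annual payout on a per-event excess-of-loss layer.

    Total cover for the year is the original limit plus one full limit per
    reinstatement. Reinstatement premiums are ignored in this sketch.
    """
    aggregate_cap = limit * (1 + reinstatements)
    per_event = sum(layer_recovery(x, retention, limit) for x in event_losses)
    return min(per_event, aggregate_cap)


def expected_layer_loss(retention, limit, reinstatements, n_years=50_000, seed=7):
    """Average annual payout over simulated years (hypothetical event model)."""
    rng = random.Random(seed)
    payouts = []
    for _ in range(n_years):
        # Assumed: 1.5 events a year on average, Pareto severities above 1m.
        events = [1e6 * rng.paretovariate(1.5) for _ in range(poisson(rng, 1.5))]
        payouts.append(annual_recovery(events, retention, limit, reinstatements))
    return statistics.fmean(payouts)


for k in (0, 1, 2):
    print(f"10m xs 10m, {k} reinstatement(s): {expected_layer_loss(10e6, 10e6, k):,.0f}")
```

Because every scenario reuses the same seed, and therefore the same simulated events, the differences between the three outputs isolate the value of each additional reinstatement.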

Pricing is now a data infrastructure problem

The challenge insurers face is therefore not only analytical - it is structural.

Modern pricing depends on the ability to manage large datasets, run high-volume simulations and integrate information from multiple systems. Achieving this requires modern data infrastructure: cloud platforms, analytical databases and scalable computing environments.

Yet in many organisations, pricing teams still operate in fragmented technology environments.

Data moves between underwriting systems, catastrophe models, pricing tools and reporting platforms, often manually and with undocumented changes along the way. As a result, actuaries and analysts spend significant time cleaning and reconciling data rather than analysing it.

The industry now collects enormous volumes of information, but too often struggles to convert it into insight quickly enough to influence decisions.

The underwriting gap

There is also a more practical problem.

Underwriters sit at the centre of the pricing process. They provide critical information about clients, exposures and deal structures. Yet, strange as it may seem, some actuarial systems were not designed with underwriters in mind.

If pricing tools are slow, opaque or difficult to use, underwriters will naturally revert to familiar methods - typically spreadsheets and personal judgement.

This creates a feedback problem. Pricing models rely on high-quality input from underwriters, but if underwriters cannot use the models easily, or see little value in their outputs, they are far less likely to engage with them.

Modern pricing platforms recognise this reality. They are designed not only to produce better models, but also to manage large datasets and give underwriters direct, easy access to these complex pricing processes.

What modern pricing looks like

Insurers modernising their pricing capabilities are therefore focusing on systems, not just models.

Claims analysis for pricing is another area where progress is already visible. Advances in data processing and machine learning allow insurers to analyse claims data far more quickly, identifying anomalies, emerging trends and potential fraud earlier.
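As a simple illustration of automated anomaly flagging, the sketch below uses the modified z-score (based on the median and median absolute deviation of log claim amounts) rather than a machine-learning model; it is a classical statistical stand-in, and the claim amounts and the 3.5 cut-off are illustrative assumptions.

```python
import math
import statistics


def flag_anomalous_claims(amounts, threshold=3.5):
    """Return indices of claims whose log amount has a modified z-score above threshold.

    The median and median absolute deviation (MAD) are far less distorted by
    the outliers we are trying to find than the mean and standard deviation.
    """
    logs = [math.log(a) for a in amounts]
    med = statistics.median(logs)
    mad = statistics.median([abs(x - med) for x in logs])
    if mad == 0:
        return []  # no spread at all, so nothing can be called unusual
    return [i for i, x in enumerate(logs) if 0.6745 * abs(x - med) / mad > threshold]


claims = [1000, 1200, 950, 1100, 1050, 980, 1020, 50000]
print(flag_anomalous_claims(claims))  # → [7]
```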

Computing power is also transforming how complex contract structures are modelled. Reinsurance features such as reinstatements, loss corridors and layered programmes can now be simulated explicitly rather than approximated.

This matters because these structures often drive the economics of a contract. When they are modelled accurately, pricing becomes far more consistent, robust and understandable.
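The gap between explicit simulation and approximation is easy to demonstrate. Layers and corridors are non-linear, so applying the structure to an average loss is not the same as averaging the structure's payout over simulated losses. The sketch assumes a hypothetical two-layer programme, one simple form of loss corridor (the cedant retains recoveries between 2m and 4m) and a made-up lognormal loss model that treats each simulated year as a single loss.

```python
import random
import statistics

# Hypothetical programme of (limit, retention) layers: 5m xs 5m and 10m xs 10m.
LAYERS = [(5e6, 5e6), (10e6, 10e6)]
# Hypothetical loss corridor: the cedant keeps recoveries between 2m and 4m.
CORRIDOR_START, CORRIDOR_WIDTH = 2e6, 2e6


def programme_recovery(loss: float) -> float:
    """Total recovery across all layers of the programme for one loss."""
    return sum(min(max(loss - ret, 0.0), lim) for lim, ret in LAYERS)


def reinsurer_payment(loss: float) -> float:
    """Programme recovery minus the slice the cedant retains in the corridor."""
    recovery = programme_recovery(loss)
    retained = min(max(recovery - CORRIDOR_START, 0.0), CORRIDOR_WIDTH)
    return recovery - retained


rng = random.Random(3)
# Assumed loss model: one lognormal aggregate loss per simulated year.
losses = [rng.lognormvariate(14.0, 1.2) for _ in range(50_000)]

explicit = statistics.fmean(reinsurer_payment(x) for x in losses)
plug_in = reinsurer_payment(statistics.fmean(losses))
print(f"explicit simulation: {explicit:,.0f}")
print(f"structure applied to the average loss: {plug_in:,.0f}")
```

Here the average loss sits below the first retention, so the plug-in approach prices the programme at zero, while the simulation picks up the years in which losses do reach the layers.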

At the same time, newer analytical techniques are improving how loss distributions are fitted and simulated. Machine learning methods can help identify relationships across large datasets and support the modelling of complex risk distributions.

None of this replaces actuarial judgement - but it significantly expands the analytical toolkit available to pricing teams.
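The fit-then-simulate workflow for loss distributions can be sketched with a classical baseline rather than a machine-learning model: a maximum-likelihood lognormal fit to claim amounts, followed by simulation of a tail percentile. The claim history here is synthetic (drawn from an assumed lognormal) and the lognormal choice is itself an assumption; real pricing work would compare several candidate distributions and check goodness of fit.

```python
import math
import random
import statistics


def fit_lognormal(claims):
    """Maximum-likelihood lognormal fit: mean and population std of log claims."""
    logs = [math.log(c) for c in claims]
    return statistics.fmean(logs), statistics.pstdev(logs)


def simulated_quantile(mu, sigma, q, n_sims=100_000, seed=11):
    """Estimate the q-quantile of the fitted distribution by simulation."""
    rng = random.Random(seed)
    draws = sorted(rng.lognormvariate(mu, sigma) for _ in range(n_sims))
    return draws[min(int(q * n_sims), n_sims - 1)]


def analytic_quantile(mu, sigma, q):
    """Closed-form lognormal quantile, used here as a check on the simulation."""
    return math.exp(mu + sigma * statistics.NormalDist().inv_cdf(q))


# Synthetic "historical" claims drawn from an assumed lognormal(10, 0.8).
rng = random.Random(5)
history = [rng.lognormvariate(10.0, 0.8) for _ in range(20_000)]

mu_hat, sigma_hat = fit_lognormal(history)
print(f"fitted mu = {mu_hat:.3f}, sigma = {sigma_hat:.3f}")
print(f"simulated 99th percentile: {simulated_quantile(mu_hat, sigma_hat, 0.99):,.0f}")
print(f"analytic 99th percentile:  {analytic_quantile(mu_hat, sigma_hat, 0.99):,.0f}")
```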

The rise of real-time information

Another shift is the growing role of real-time data.

Cloud infrastructure and APIs allow insurers to integrate external data sources directly into pricing workflows. Weather data, news feeds and other signals can be monitored continuously, helping underwriters identify emerging risks more quickly.

This capability is particularly valuable for pricing in fast-moving situations, such as geopolitical events (as we are currently seeing in the Middle East) or natural catastrophes like widespread wildfires, where decisions need to be made before the full picture is clear.

Instead of relying solely on historical information, insurers can increasingly run scenario analyses dynamically, incorporating new data as it emerges.

Accelerated underwriting

Artificial intelligence will accelerate these trends further.

Large language models and other AI systems are already capable of summarising complex documents, extracting information from unstructured data and identifying patterns that would be difficult for humans to detect at scale.

In underwriting, these tools can help analyse broker submissions, review historical claims information and surface relevant external risk signals.

Some underwriting decisions for lower-value risks are already being automated through agentic AI systems. But the larger opportunity right now lies in augmenting underwriting rather than automating it. Insurers rely on people to price complex risks and are not comfortable delegating those decisions fully to AI, but they do want underwriters to benefit from AI-generated insight.

Underwriters are increasingly becoming decision-makers supported by systems capable of synthesising vast amounts of information in real time.

The strategic choice

The insurance industry is now at a turning point.

The technologies required to move beyond spreadsheet-based pricing - cloud computing, scalable data platforms, advanced analytics and AI - already exist and are now widely accessible.

The question is no longer whether insurers will adopt them. It is how quickly.

Firms that treat pricing as a strategic capability, supported by modern data infrastructure and integrated systems, will be better positioned to understand risk and deploy capital efficiently towards the most profitable business.

Those that continue to rely on legacy processes risk making decisions with only a fraction of the available information - a serious disadvantage in an increasingly competitive market as rates soften.

Dani’s actuarial experience and passion are key. He is a strong advocate of innovation, optimism and communication, both within the team and with clients. Dani’s ability and experience with data ensure that we always maximise value and efficiency for every project, helping to unlock hidden value for our clients’ business.