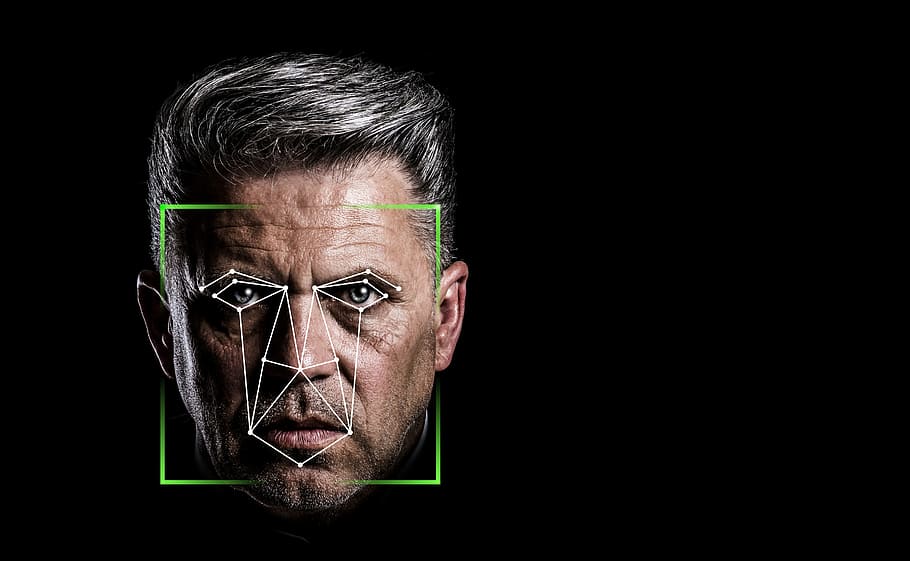

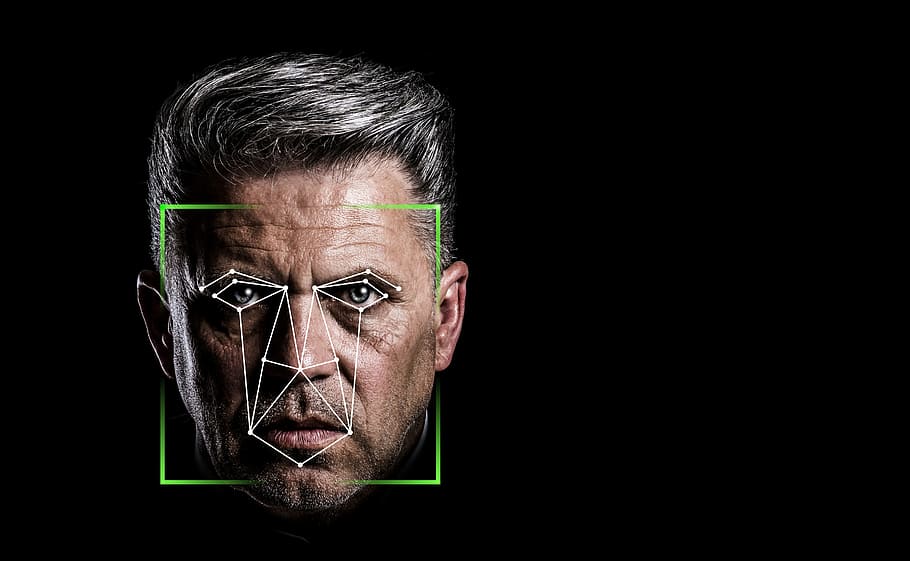

The debate over racial bias in tech has been renewed as a university in America claims it can “predict criminality” through facial recognition.

Researchers at Harrisburg University claim that they can “predict if someone is a criminal based solely on a picture of their face” through software “intended to help law enforcement prevent crime”.

One member from the Harrisburg research team in particular claimed that “Identifying the criminality of [a] person from their facial image will enable a significant advantage for law-enforcement agencies and other intelligence agencies to prevent crime from occurring.”

The university have said that this research would be included in a Springer Nature book. However, Springer have stated that it was “at no time” accepted, saying that the research “went through a thorough peer review process. The series editor’s decision to reject the final paper was made on Tuesday 16 June and was officially communicated to the authors on Monday 22 June.”

Whilst the Harrisburg researchers claim their technology holds “no racial bias”, there has still been considerable backlash against the research, with 1,700 academics signing an open letter demanding that it stay unpublished.

The Coalition for Critical Technology, the organisers of this open letter, have stated that “Such claims are based on unsound scientific premises, research, and methods, which numerous studies spanning our respective disciplines have debunked over the years”, and that “all publishers must refrain from publishing similar studies in the future”.

The group have drawn attention to the distorted data that feeds this perception of what a criminal “looks like”, pointing to a number of studies that suggest ethnic minorities are treated more harshly throughout the criminal justice system.

Krittika D’Silva, a computer-science researcher at Cambridge University, commented: “It is irresponsible for anyone to think they can predict criminality based solely on a picture of a person’s face.”

“The implications of this are that crime ‘prediction’ software can do serious harm – and it is important that researchers and policymakers take these issues seriously.”

D’Silva also points to the number of studies revealing that machine learning holds a range of biases: “Numerous studies have shown that machine-learning algorithms, in particular face-recognition software, have racial, gendered, and age biases.”

Harrisburg University have decided not to publish this paper on the facial recognition software. They state that the news release outlining the research, titled “A Deep Neural Network Model to Predict Criminality Using Image Processing”, has been removed at the request of the faculty involved, and that the publication in which the research was due to appear has since decided against including it. The university state on their website:

“Academic freedom is a universally acknowledged principle that has contributed to many of the world’s most profound discoveries. This University supports the right and responsibility of university faculty to conduct research and engage in intellectual discourse, including those ideas that can be viewed from different ethical perspectives. All research conducted at the University does not necessarily reflect the views and goals of the University.”
