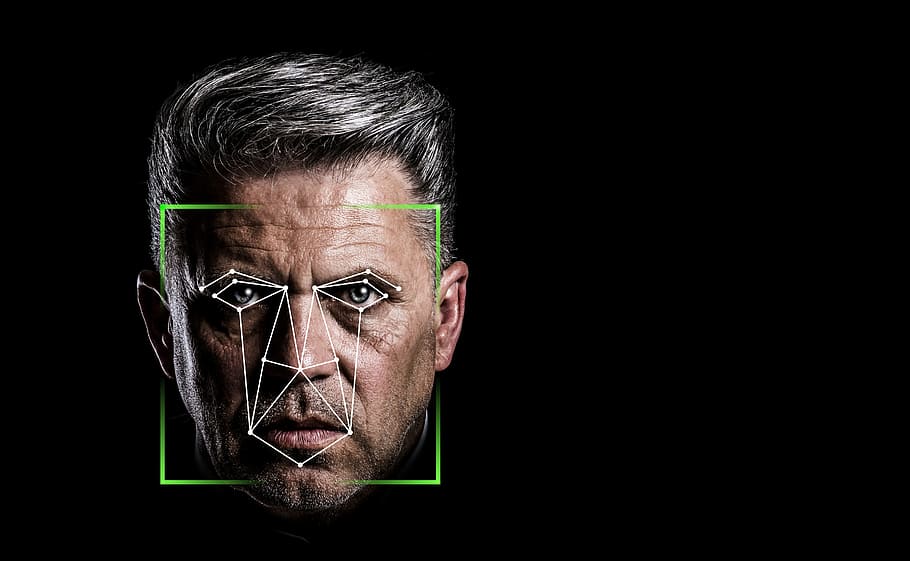

The debate over racial bias in technology has been renewed after a university in the United States claimed it could “predict criminality” using facial recognition.

Researchers at Harrisburg University claim that they can “predict if someone is a criminal based solely on a picture of their face” through software “intended to help law enforcement prevent crime”.

One member of the research team claimed that “Identifying the criminality of [a] person from their facial image will enable a significant advantage for law-enforcement agencies and other intelligence agencies to prevent crime from occurring.”

The university said that the research would be included in a Springer Nature book. However, Springer stated that the paper was “at no time” accepted, adding that it “went through a thorough peer review process. The series editor’s decision to reject the final paper was made on Tuesday 16 June and was officially communicated to the authors on Monday 22 June.”

Whilst the Harrisburg researchers claim their technology has “no racial bias”, the research has drawn considerable backlash: 1,700 academics signed an open letter demanding that it remain unpublished.

The Coalition for Critical Technology, which organised the open letter, stated that “Such claims are based on unsound scientific premises, research, and methods, which numerous studies spanning our respective disciplines have debunked over the years”, and that “all publishers must refrain from publishing similar studies in the future”.

The group has drawn attention to the distorted data that feeds this perception of what a criminal “looks like”, pointing to a number of studies that suggest harsher treatment of ethnic minorities throughout the criminal justice system.

Krittika D’Silva, a computer-science researcher at the University of Cambridge, commented: “It is irresponsible for anyone to think they can predict criminality based solely on a picture of a person’s face.”

“The implications of this are that crime ‘prediction’ software can do serious harm – and it is important that researchers and policymakers take these issues seriously.”

D’Silva also pointed to research showing that machine-learning systems can carry a range of biases: “Numerous studies have shown that machine-learning algorithms, in particular face-recognition software, have racial, gendered, and age biases.”

Harrisburg University has since decided not to publish the paper. It stated that the news release outlining the research, a paper titled “A Deep Neural Network Model to Predict Criminality Using Image Processing”, had been removed at the request of the faculty involved, and that the publication in which the research was due to appear had since decided against publishing it. The university states on its website:

“Academic freedom is a universally acknowledged principle that has contributed to many of the world’s most profound discoveries. This University supports the right and responsibility of university faculty to conduct research and engage in intellectual discourse, including those ideas that can be viewed from different ethical perspectives. All research conducted at the University does not necessarily reflect the views and goals of the University.”

What are the four types of artificial intelligence?

Reactive machines, theory of mind, limited memory, and self-awareness are four main types of AI categories to be aware of. Read more about them in this guide.

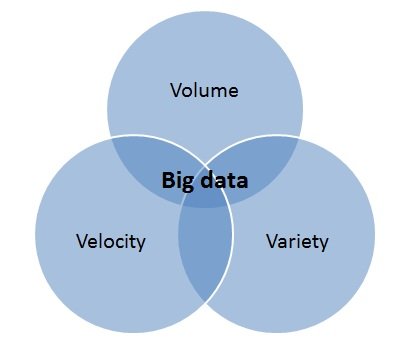

What are the 3 V's of big data?

What are the 3 v’s of big data? Find out more as the data experts at Optalitix explain what they mean by using examples to illustrate each of them.

How to Become a Data Scientist

When pricing a new product, the starting point is almost always Excel. Learn how to create a pricing application from a spreadsheet pricing model here.

Holiday AI Thoughts

The key to selling is to establish a relevant dialogue and understanding with your clients. Find out how AI systems can be used to target the right consumers.

Artificial Intelligence powered insurance is now inevitable!

AI is changing the competitive landscape for insurers by delivering a competitive edge in a number of areas. Read more about AI in insurance.

AI Accessible for all - Ignore at your peril!

As AI continues to develop, businesses of all sizes will need to engage with the technology and embrace the power of machine learning to improve their processes.

The Computable Game

Data analysis with Optalitix—an online underwriting tool offering advanced data-based services, including database management and analytics to insurers & bankers.

AI and machine learning in a GDPR environment.

GDPR refers to the EU’s upcoming data protection regime. Find out whether the anonymisation of data, as prescribed by GDPR, would break AI models and more here.

Cultural diversity drives better AI outcomes

Agile organisations that can respond to the global challenges of their often multinational customer base are often the ones that thrive. Read more in this guide.

Why cant lending be like Uber?

Lending is constantly changing with entrants, regulations and technology. Learn how technology can give businesses market leading edge like Uber in this guide.

Why are Insurers playing a guessing game?

Numerous insurers overlook advanced solutions. Make sure your company leverages valuable insights from its data to enhance the user experience with Optalitix!

The future of life insurance

In the past, choosing the right life insurance was an arduous task. Thanks to data analysis and algorithms, shopping for life insurance has become much simpler.

Insurance quote personalisation is magic!

Dani Katz talks about how Optalitix can improve every aspect of the insurance business through better use of data, quotations and more. Read more in this guide.

A meaningful connection. The next phase of API relationships.

Data analytics and API closely intertwine, but what is the next phase in their relationship? Learn more about this successful financial connection here.